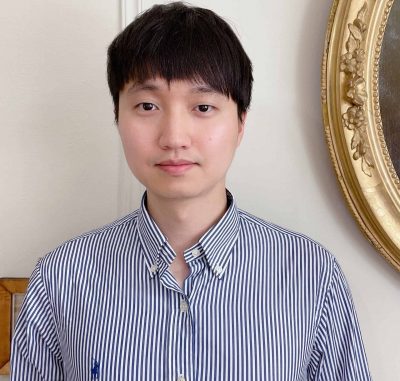

Dong Young Lim

The aim of this project is to (i) develop high-performance machine learning (ML) and other data-driven algorithms for solving large-scale optimization problems, (ii) address theoretical issues such as feasibility, convergence, and computational efficiency, and (iii) apply and test the algorithms on problems arising in finance and banking.

The project focuses on developing a novel class of adaptive stochastic optimization algorithms that overcome the known limitations of popular adaptive optimizers, including Adam, AMSGrad, and RMSProp. The main idea is to unify a taming technique for stochastic differential equations with monotone coefficients with the theory of Langevin dynamics. In addition, a boosting function is designed to accelerate training with the newly proposed algorithm. The project will provide a global convergence analysis and evaluate performance on a variety of neural network models trained on real-world datasets. The outputs of this project will offer insight into challenging issues in training neural networks.
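To make the idea of combining taming with Langevin dynamics more concrete, the following is a minimal sketch of a generic tamed Langevin update. It is not the project's algorithm: the taming factor shown is one common textbook choice, no boosting function is included, and the names `tamed_langevin_step`, `grad_quartic`, `step`, and `beta` are assumptions introduced here for illustration only.

```python
import numpy as np

def tamed_langevin_step(theta, grad, step, beta, rng):
    """One generic tamed Langevin update (illustrative sketch only)."""
    g = grad(theta)
    # Taming: rescale the gradient so that the drift step * tamed
    # always has norm below 1, even when the gradient grows super-linearly.
    tamed = g / (1.0 + step * np.linalg.norm(g))
    # Langevin noise; beta is an inverse-temperature parameter (assumed name).
    noise = rng.standard_normal(theta.shape)
    return theta - step * tamed + np.sqrt(2.0 * step / beta) * noise

def grad_quartic(theta):
    # Gradient of the toy objective f(theta) = |theta|^4 / 4,
    # whose gradient grows cubically (hypothetical test problem).
    return np.dot(theta, theta) * theta

rng = np.random.default_rng(0)
theta = np.array([10.0, -10.0])
for _ in range(5000):
    theta = tamed_langevin_step(theta, grad_quartic, step=0.01, beta=1e8, rng=rng)
```

In this toy setting the tamed drift can never move the iterate by more than one unit per step, which is the property that keeps the scheme stable for gradients with super-linear growth. A large `beta` makes the injected noise small, so the iterates settle near the minimizer at the origin.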

This TRAIN@Ed project has received funding from the DDI programme and the European Union’s Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie grant agreement No 801215.
